8i | 9i | 10g | 11g | 12c | 13c | 18c | 19c | 21c | 23ai | Misc | PL/SQL | SQL | RAC | WebLogic | Linux

Oracle Cloud : Autonomous Database (ADW or ATP) - Load Data from an Object Store (DBMS_CLOUD)

This article demonstrates how to load data into an Autonomous Data Warehouse (ADW) or Autonomous Transaction Processing (ATP) service on the Oracle Cloud using the DBMS_CLOUD package. The Oracle documentation provides a tutorial, similar to the hands-on lab I attended at Oracle Open World 2017, described here. This article is an example of working through that tutorial.

The examples in this article are based on the Autonomous Data Warehouse (ADW), but the same method works fine for the Automated Transaction Processing (ATP) service too.

- Create a New User

- Create Tables

- Upload Data to S3

- Object Store Credentials

- Load Data from S3

- External Tables from S3

- DBMS_CLOUD

- Autonomous Transaction Processing (ATP)

Related articles.

- DBMS_CLOUD Package

- Oracle Cloud : Autonomous Data Warehouse (ADW) - Create Service

- Oracle Cloud : Autonomous Transaction Processing (ATP) - Create Service

- Oracle Cloud : Autonomous Database (ADW or ATP) - Import Data from an Object Store (impdp)

- Autonomous Database

- Cloud : All Articles

Create a New User

Log into your ADW or ATP cloud service, as described here.

Create a new user and grant it the DWROLE role.

--DROP USER my_user CASCADE; CREATE USER my_user IDENTIFIED BY "MyPassword123"; GRANT dwrole TO my_user;

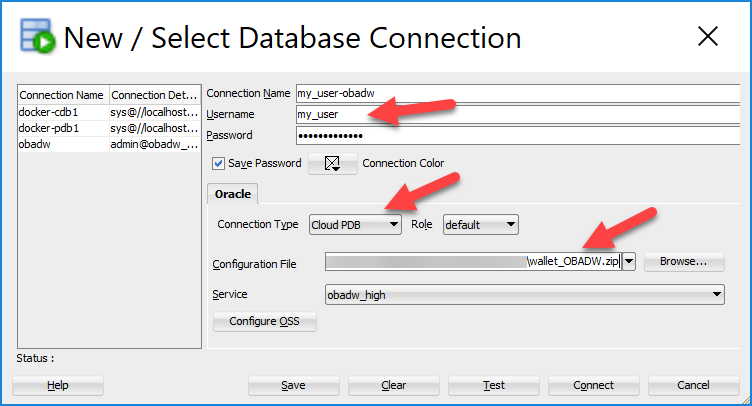

Connect to the new user. You can see how to download the credentials here. This is what my SQL Developer connection looked like.

Once you're connected to the new user you can start creating objects.

Create Tables

The next step is to create some tables. The tutorial provides a script to create the Sales History (SH) schema tables here. I didn't want the username prefix on all the objects, so I edited that script. You can grab my version of the script here (create_sh_tables.sql).

Create the tables in the script.

@create_sh_tables.sql

Upload Data to S3

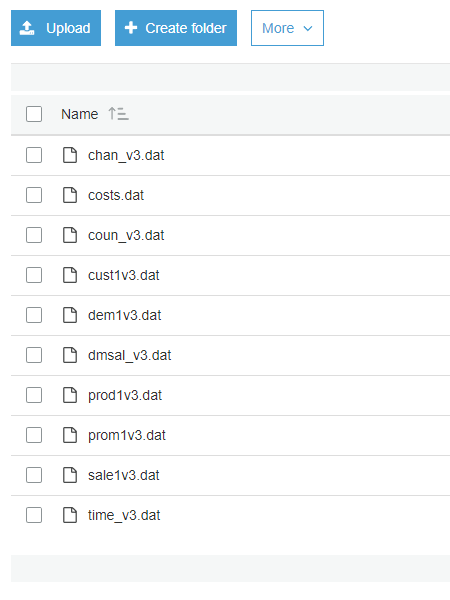

The tutorial provides a zip file containing the data files to load the tables. Download the zip file (here).

We're going to use an S3 bucket as an object store. You could use the Oracle Cloud object store as described in the documentation. Unzip the files and upload them to your S3 bucket.

Object Store Credentials

We need to create a credential containing a username and password for the connection to the object store. If you are an AWS S3 bucket the username and password are as follows.

- username : AWS access key

- password : AWS secret access key

The credentials are dropped and created using the DROP_CREDENTIAL and CREATE_CREDENTIAL procedures of the DBMS_CLOUD package respectively.

BEGIN

DBMS_CLOUD.drop_credential(credential_name => 'OBJ_STORE_CRED');

END;

/

BEGIN

DBMS_CLOUD.create_credential (

credential_name => 'OBJ_STORE_CRED',

username => 'my AWS access key',

password => 'my AWS secret access key'

) ;

END;

/

There are also DISABLE_CREDENTIAL, ENABLE_CREDENTIAL and UPDATE_CREDENTIAL procedures.

Load Data from S3

We can load a table from a file in an object store using the COPY_DATA procedure. At minimum you will have to identify the destination table, object store credential, data object URL and a format to describe how to handle the load operation. If your data doesn't match your entire column list, or the column order, you will also have to specify the field list.

BEGIN

DBMS_CLOUD.copy_data(

table_name => 'CHANNELS',

credential_name => 'OBJ_STORE_CRED',

file_uri_list => 'https://s3-eu-west-1.amazonaws.com/my-sh-data-bucket/chan_v3.dat',

format => json_object('ignoremissingcolumns' value 'true', 'removequotes' value 'true')

);

END;

/

SELECT * FROM channels;

CHANNEL_ID CHANNEL_DESC CHANNEL_CLASS CHANNEL_CLASS_ID CHANNEL_TOTAL CHANNEL_TOTAL_ID

---------- -------------------- -------------------- ---------------- ------------- ----------------

3 Direct Sales Direct 12 Channel total 1

9 Tele Sales Direct 12 Channel total 1

5 Catalog Indirect 13 Channel total 1

4 Internet Indirect 13 Channel total 1

2 Partners Others 14 Channel total 1

SQL>

The tutorial provides a script (here) to load all the tables. I've made a variation of this script that makes it a bit more generic by using variables for the credential and object store URL. You can grab my version of the script here (load_sh_tables.sql).

Run the script to load the tables.

TRUNCATE TABLE channels; VARIABLE credential_name VARCHAR2(32767) VARIABLE object_store_url VARCHAR2(32767) BEGIN :credential_name := 'OBJ_STORE_CRED'; :object_store_url := 'https://s3-eu-west-1.amazonaws.com/my-sh-data-bucket/'; END; / @load_sh_tables.sql

You can check on the status of the copy operations by querying the USER_LOAD_OPERATIONS view.

SELECT * FROM user_load_operations WHERE type = 'COPY' ORDER BY id;

The LOGFILE_TABLE and BADFILE_TABLE columns contain the names of external tables for accessing the log file and bad file related to each load operation. The external table names are in the form of COPY$1_LOG, COPY$1_BAD with an incrementing integer value. You can check the log and bad files as follows.

SELECT * FROM copy$1_log; SELECT * FROM copy$1_bad;

You can clean up after a load operation using either the DELETE_OPERATION or the DELETE_ALL_OPERATIONS procedure.

BEGIN DBMS_CLOUD.delete_operation(id => 1); DBMS_CLOUD.delete_all_operations(type => 'COPY'); END; /

External Tables from S3

In addition to loading data, we can also create external tables against data stored in object stores using the CREATE_EXTERNAL_TABLE procedure. It's similar to the COPY_DATA procedure shown above, but also includes a mandatory column list.

BEGIN

DBMS_CLOUD.create_external_table(

table_name => 'CHANNELS_EXT',

credential_name => 'OBJ_STORE_CRED',

file_uri_list => 'https://s3-eu-west-1.amazonaws.com/my-sh-data-bucket/chan_v3.dat',

column_list => 'CHANNEL_ID NUMBER,

CHANNEL_DESC VARCHAR2(20),

CHANNEL_CLASS VARCHAR2(20),

CHANNEL_CLASS_ID NUMBER,

CHANNEL_TOTAL VARCHAR2(13),

CHANNEL_TOTAL_ID NUMBER',

format => json_object('ignoremissingcolumns' value 'true', 'removequotes' value 'true')

);

END;

/

SELECT * FROM channels_ext;

CHANNEL_ID CHANNEL_DESC CHANNEL_CLASS CHANNEL_CLASS_ID CHANNEL_TOTAL CHANNEL_TOTAL_ID

---------- -------------------- -------------------- ---------------- ------------- ----------------

3 Direct Sales Direct 12 Channel total 1

9 Tele Sales Direct 12 Channel total 1

5 Catalog Indirect 13 Channel total 1

4 Internet Indirect 13 Channel total 1

2 Partners Others 14 Channel total 1

SQL>

DBMS_CLOUD

The documentation about the DBMS_CLOUD package is a little lite, with most of the routines in the package undocumented. A description of the package reveals the following routines, some with overloads. Many of the routines are used for local file and remote object manipulation.

SQL> desc DBMS_CLOUD PROCEDURE COPY_DATA Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TABLE_NAME VARCHAR2 IN CREDENTIAL_NAME VARCHAR2 IN FILE_URI_LIST CLOB IN SCHEMA_NAME VARCHAR2 IN FIELD_LIST CLOB IN FORMAT CLOB IN PROCEDURE COPY_DATA Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TABLE_NAME VARCHAR2 IN CREDENTIAL_NAME VARCHAR2 IN FILE_URI_LIST CLOB IN OPERATION_ID NUMBER OUT SCHEMA_NAME VARCHAR2 IN FIELD_LIST CLOB IN FORMAT CLOB IN PROCEDURE CREATE_CREDENTIAL Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN USERNAME VARCHAR2 IN PASSWORD VARCHAR2 IN TENANCY_OCID VARCHAR2 IN USER_OCID VARCHAR2 IN PRIVATE_KEY VARCHAR2 IN PUBLIC_KEY VARCHAR2 IN REGION VARCHAR2 IN PROCEDURE CREATE_EXTERNAL_TABLE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TABLE_NAME VARCHAR2 IN CREDENTIAL_NAME VARCHAR2 IN FILE_URI_LIST CLOB IN COLUMN_LIST CLOB IN FIELD_LIST CLOB IN FORMAT CLOB IN PROCEDURE DELETE_ALL_OPERATIONS Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TYPE VARCHAR2 IN PROCEDURE DELETE_FILE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- DIRECTORY_NAME VARCHAR2 IN FILE_NAME VARCHAR2 IN PROCEDURE DELETE_OBJECT Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN PROCEDURE DELETE_OPERATION Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- ID NUMBER IN PROCEDURE DISABLE_CREDENTIAL Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN PROCEDURE DROP_CREDENTIAL Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN PROCEDURE ENABLE_CREDENTIAL Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN FUNCTION GET_METADATA RETURNS CLOB Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN FUNCTION GET_OBJECT RETURNS BLOB Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN STARTOFFSET NUMBER IN ENDOFFSET NUMBER IN COMPRESSION VARCHAR2 IN FUNCTION GET_OBJECT RETURNS BLOB Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN DIRECTORY_NAME VARCHAR2 IN FILE_NAME VARCHAR2 IN STARTOFFSET NUMBER IN ENDOFFSET NUMBER IN COMPRESSION VARCHAR2 IN FUNCTION LIST_FILES RETURNS TABLE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- OBJECT_NAME VARCHAR2(4000) OUT BYTES NUMBER OUT DIRECTORY_NAME VARCHAR2 IN FUNCTION LIST_OBJECTS RETURNS TABLE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- OBJECT_NAME VARCHAR2(4000) OUT BYTES NUMBER OUT CREDENTIAL_NAME VARCHAR2 IN LOCATION_URI VARCHAR2 IN PROCEDURE PUT_OBJECT Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN CONTENTS BLOB IN COMPRESSION VARCHAR2 IN PROCEDURE PUT_OBJECT Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN OBJECT_URI VARCHAR2 IN DIRECTORY_NAME VARCHAR2 IN FILE_NAME VARCHAR2 IN COMPRESSION VARCHAR2 IN PROCEDURE UPDATE_CREDENTIAL Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- CREDENTIAL_NAME VARCHAR2 IN ATTRIBUTE VARCHAR2 IN VALUE VARCHAR2 IN PROCEDURE VALIDATE_EXTERNAL_TABLE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TABLE_NAME VARCHAR2 IN SCHEMA_NAME VARCHAR2 IN ROWCOUNT NUMBER IN STOP_ON_ERROR PL/SQL BOOLEAN IN PROCEDURE VALIDATE_EXTERNAL_TABLE Argument Name Type In/Out Default? ------------------------------ ----------------------- ------ -------- TABLE_NAME VARCHAR2 IN OPERATION_ID NUMBER OUT SCHEMA_NAME VARCHAR2 IN ROWCOUNT NUMBER IN STOP_ON_ERROR PL/SQL BOOLEAN IN

Autonomous Transaction Processing (ATP)

The methods for loading tables with data from an object store and creating external tables against files in an object store are the same for the Autonomous Transaction Processing (ATP) service as the Autonomous Data Warehouse (ADW) service.

Remember you are able to create a variety of access structures in ATP that you can't in ADW.

For more information see:

- Autonomous Data Warehouse Cloud Tutorials

- DBMS_CLOUD

- DBMS_CLOUD Package

- Oracle Cloud : Autonomous Data Warehouse (ADW) - Create Service

- Oracle Cloud : Autonomous Transaction Processing (ATP) - Create Service

- Oracle Cloud : Autonomous Database (ADW or ATP) - Import Data from an Object Store (impdp)

- Autonomous Database

- Cloud : All Articles

Hope this helps. Regards Tim...